from bioMONAI.data import *

from bioMONAI.transforms import *

from bioMONAI.core import *

from bioMONAI.core import Path

from bioMONAI.data import get_images, get_target, RandomSplitter

from bioMONAI.losses import *

from bioMONAI.losses import SSIMLoss

from bioMONAI.metrics import *

from bioMONAI.datasets import download_file

Histopathology

Setup imports

import warnings

warnings.filterwarnings("ignore")

device = get_device()

print(device)

cuda

Download Data

In the next cell, we download the dataset required for this tutorial. The dataset is hosted online, and we use the download_file function from the bioMONAI library to download and extract the files.

- You can change the output_directory variable to specify a different directory where you want to save the downloaded files.
- The url variable contains the link to the dataset. If you have a different dataset, you can replace this URL with the link to your dataset.
- The dataset is downloaded as a single ZIP archive and extracted (extract=True). If you replace the URL, update the hash argument as well, since the value given here belongs to this specific file.

Make sure you have enough storage space in the specified directory before downloading the dataset.
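The download_file call in this tutorial is given a 64-character hexadecimal hash, which is the length of a SHA-256 digest. Assuming that is the algorithm in use (the source does not say so), the following minimal sketch shows how such a check can be done with the standard library. The helper names sha256_of_file and matches_expected_hash are hypothetical and are not part of bioMONAI.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel stops when read() returns an empty bytes object.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected_hash(path, expected_hex):
    """Compare the file digest with the expected value, ignoring letter case."""
    return sha256_of_file(path) == expected_hex.lower()


# Demonstration on a small temporary file (not the real dataset).
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample.bin"
    sample.write_bytes(b"abc")
    digest = sha256_of_file(sample)
    ok = matches_expected_hash(sample, hashlib.sha256(b"abc").hexdigest().upper())

print(digest, ok)
```

Reading in chunks keeps memory use bounded, which matters for large archives.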

# Specify the directory where you want to save the downloaded files

output_directory = "../_data/TNBC_NucleiSegmentation"

# Define the base URL for the dataset

url = 'https://zenodo.org/records/2579118/files/TNBC_NucleiSegmentation.zip'

# Download the ZIP archive and extract it

download_file(url, output_directory, extract=True, extract_dir='.', hash='014bc1e08d6459be5508620ad219063a45179a1767b7caf84d64245d7f6cc5a3')

The file has been downloaded and saved to: /home/biagio/bioMONAI/nbs/_data/TNBC_NucleiSegmentation

Prepare Data for Training

In the next cell, we prepare the data for training. We specify the path to the training images, define the batch size and patch size, and apply several transformations to augment the dataset and improve the model’s robustness.

- X_path: The path to the directory containing the training images.
- bs: The batch size, which determines the number of images processed together in one iteration.
- patch_size: The size of the patches to be extracted from the images.
- item_tfms: A list of item-level transformations applied to each image, here channel cropping, random 90-degree rotation, and random flipping.
- batch_tfms: A list of batch-level transformations applied to each batch of images, here percentile-based intensity scaling.
- get_target_fn: A function that returns the path of the ground truth image corresponding to an input image.

You can customize the following parameters to suit your needs:

- Change the X_path variable to point to a different dataset.
- Adjust the bs and patch_size variables to match your hardware capabilities and model requirements.
- Modify the transformations in item_tfms and batch_tfms to include other augmentations or preprocessing steps.

After defining these parameters and transformations, we create a BioDataLoaders object to load the training and validation datasets.
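The intensity scaling used as a batch transformation can be pictured with a small pure-Python sketch. It assumes that percentile scaling maps a low and a high percentile of the intensities to 0 and 1 and clips values outside that range. The 1st and 99th percentile defaults, the clipping, and the function names are illustrative assumptions, not the documented behaviour of ScaleIntensityPercentiles.

```python
def percentile(sorted_values, q):
    """Linear-interpolation percentile (q in [0, 100]) of an already sorted list."""
    position = (len(sorted_values) - 1) * q / 100
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    fraction = position - lower
    return sorted_values[lower] + fraction * (sorted_values[upper] - sorted_values[lower])


def scale_by_percentiles(values, low=1.0, high=99.0):
    """Rescale values so the low/high percentiles map to 0/1, clipping the rest."""
    ordered = sorted(values)
    lo, hi = percentile(ordered, low), percentile(ordered, high)
    if hi == lo:
        # Constant input: there is no range to stretch, so return zeros.
        return [0.0 for _ in values]
    return [min(1.0, max(0.0, (v - lo) / (hi - lo))) for v in values]


# Hypothetical intensities 0..100 standing in for the pixels of one image.
scaled = scale_by_percentiles(list(range(101)))
print(scaled[0], scaled[50], scaled[100])
```

Scaling by percentiles rather than by the minimum and maximum makes the result less sensitive to a few extreme pixel values.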

X_path = Path(output_directory)/'TNBC_dataset'

img_paths = get_images(X_path,'Slide*')

# create a function to get the target path from the image path

get_target_fn = get_target('GT', same_filename=True, relative_path=True, map_foldername=True, target_folder_prefix="GT", signal_folder_prefix="Slide")
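To make the folder mapping concrete, here is a minimal standard-library sketch of the same idea: replace the Slide prefix of the parent folder with GT and keep the file name. The function slide_to_gt is a hypothetical stand-in for illustration only; it is not how get_target is implemented.

```python
from pathlib import PurePosixPath


def slide_to_gt(image_path, signal_prefix="Slide", target_prefix="GT"):
    """Swap the prefix of the parent folder name and keep the file name unchanged."""
    path = PurePosixPath(image_path)
    folder = path.parent.name
    if not folder.startswith(signal_prefix):
        raise ValueError(f"unexpected folder name: {folder}")
    target_folder = target_prefix + folder[len(signal_prefix):]
    return str(path.parent.with_name(target_folder) / path.name)


mapped = slide_to_gt("../_data/TNBC_NucleiSegmentation/TNBC_dataset/Slide_07/07_2.png")
print(mapped)
```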

print('input:', img_paths[4], '\ntarget:', get_target_fn(img_paths[4]))

input: ../_data/TNBC_NucleiSegmentation/TNBC_dataset/Slide_07/07_2.png

target: ../_data/TNBC_NucleiSegmentation/TNBC_dataset/GT_07/07_2.png

gt_paths = [get_target_fn(img_path) for img_path in img_paths]

patch_size = (4, 128, 128)

overlap = 0.5

# save_patches_grid(img_paths, gt_paths, output_directory, patch_size, overlap)

n_channels = 1
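The patch_size and overlap values describe a grid of overlapping patches. The sketch below shows one common way to place such patches along a single axis, assuming the stride is patch × (1 − overlap) and that a final patch is added flush with the border when needed. This is an illustration of the idea, not the actual logic of save_patches_grid, and the 512-pixel and 300-pixel axis lengths are hypothetical.

```python
def patch_starts(length, patch, overlap):
    """Start indices of patches of size `patch` along an axis of size `length`."""
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in [0, 1)")
    if patch > length:
        raise ValueError("patch is larger than the axis")
    stride = max(1, int(round(patch * (1 - overlap))))
    starts = list(range(0, length - patch + 1, stride))
    # Add a final patch flush with the border if the stride leaves a remainder.
    if starts[-1] != length - patch:
        starts.append(length - patch)
    return starts


# With patch 128 and overlap 0.5 the stride is 64 pixels.
starts_512 = patch_starts(512, 128, 0.5)
starts_300 = patch_starts(300, 128, 0.5)
print(starts_512)
print(starts_300)
```

Applying the same function to each spatial axis and combining the start indices gives the full 2D grid.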

data_ops = {
    'fn_col': ['path_signal'],
    'target_col': ['path_target'],
    'valid_col': ['is_valid'],
    'seed': 42,
    'bs': 16,
    'img_cls': BioImageMulti,
    'target_img_cls': BioImage, # class for target images
    'item_tfms': [CropND(slices=[(0,n_channels)], dims=[0]), # crop channels
                  RandRot90(prob=.75),
                  RandFlip(prob=0.75)],
    'batch_tfms': [ScaleIntensityPercentiles()], # batch transformations
}

data = BioDataLoaders.from_csv(
    '',
    output_directory + '/patches_train.csv',
    show_summary=False,
    **data_ops,
)
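The column names passed above (path_signal, path_target, is_valid) suggest a CSV in which each row pairs an input patch with its target and flags whether it belongs to the validation set. The following standard-library sketch shows that split on a tiny in-memory CSV. The file names in it are hypothetical, and the accepted truthy values for is_valid are an assumption.

```python
import csv
import io

# Hypothetical three-row CSV using the column names from this tutorial.
csv_text = """path_signal,path_target,is_valid
Slide_01/a.png,GT_01/a.png,0
Slide_01/b.png,GT_01/b.png,0
Slide_02/c.png,GT_02/c.png,1
"""


def split_rows(handle):
    """Return (train, valid) lists of (signal, target) path pairs."""
    train, valid = [], []
    for row in csv.DictReader(handle):
        pair = (row["path_signal"], row["path_target"])
        is_valid = row["is_valid"].strip().lower() in {"1", "true"}
        (valid if is_valid else train).append(pair)
    return train, valid


train_pairs, valid_pairs = split_rows(io.StringIO(csv_text))
print(len(train_pairs), len(valid_pairs))
```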

# print length of training and validation datasets

print('train images:', len(data.train_ds.items), '\nvalidation images:', len(data.valid_ds.items))train images: 1611

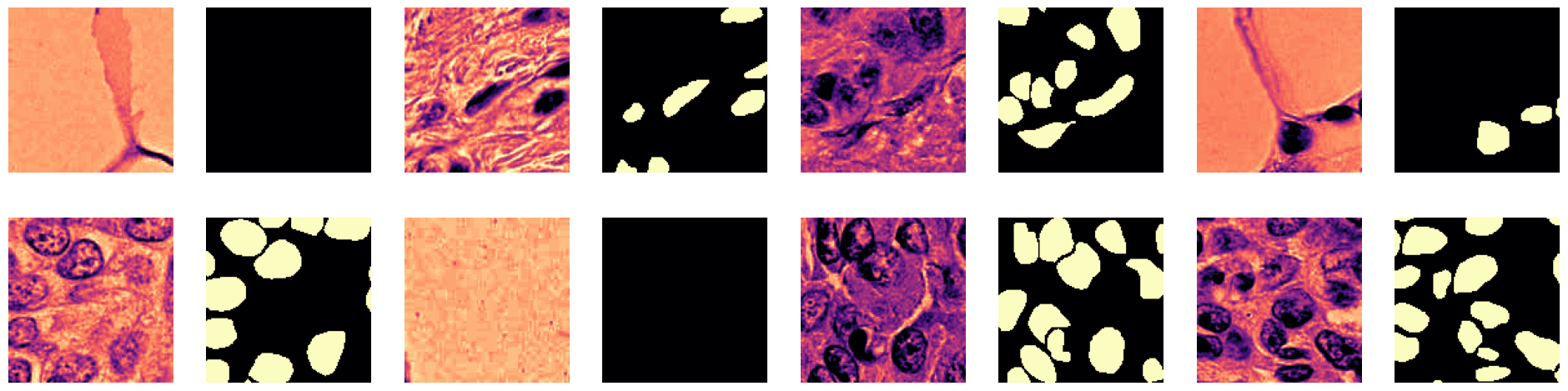

validation images: 231Visualize a Batch of Training Data

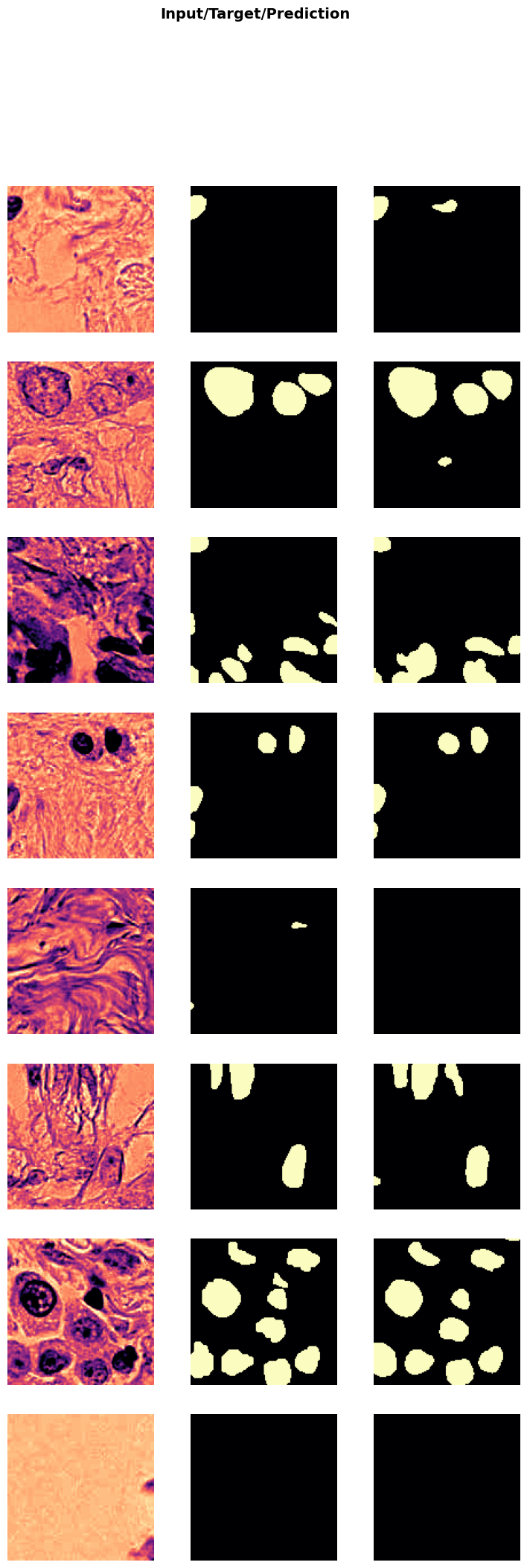

In the next cell, we visualize a batch of training data to see what the images look like after the transformations are applied. This confirms that the data augmentation and preprocessing steps work as expected and gives a better understanding of the dataset.

- data.show_batch(cmap='magma'): Displays a batch of images from the training dataset using the ‘magma’ colormap.
- Change the cmap parameter to use a different colormap (e.g., ‘gray’, ‘viridis’, ‘plasma’) based on your preference.

data.show_batch(cmap='magma')

Define and Train the Model

# from monai.networks.nets import UNETR

from bioMONAI.nets import create_unet_model, resnet34

from fastai.vision.all import xresnet50

# model = UNETR(in_channels=n_channels, out_channels=1, img_size=patch_size[1:], feature_size=32, norm_name='batch', spatial_dims=2)

model = create_unet_model(resnet34, 1, patch_size[1:], True, n_in=n_channels, cut=None, blur_final=True, self_attention=False)

from fastai.vision.all import BCEWithLogitsLossFlat

loss = BCEWithLogitsLossFlat()

metrics = [MSEMetric(), MAEMetric(), SSIMMetric(2)]
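For readers unfamiliar with the loss chosen above, the sketch below computes binary cross-entropy directly from raw model outputs (logits) in a numerically stable way. It is a pure-Python illustration of the formula on flat lists, assuming mean reduction; the function name bce_with_logits is hypothetical and this is not the fastai implementation.

```python
import math


def bce_with_logits(logits, targets):
    """Mean binary cross-entropy computed directly from logits."""
    if len(logits) != len(targets):
        raise ValueError("logits and targets must have the same length")
    total = 0.0
    for x, y in zip(logits, targets):
        # Stable form of -[y*log(sigmoid(x)) + (1-y)*log(1-sigmoid(x))]:
        # it avoids computing exp() of a large positive number.
        total += max(x, 0.0) - x * y + math.log1p(math.exp(-abs(x)))
    return total / len(logits)


# A logit of 0 means probability 0.5, so the loss equals ln(2).
print(bce_with_logits([0.0], [1.0]))
```

Working on logits rather than on probabilities is what allows the loss to stay finite for very confident predictions.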

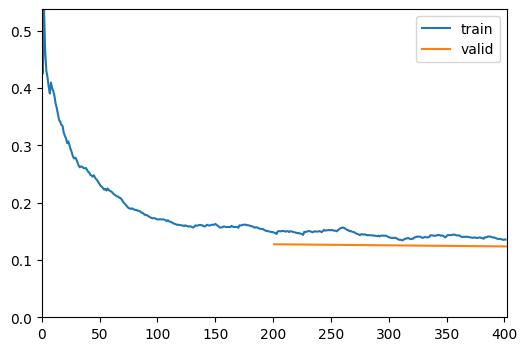

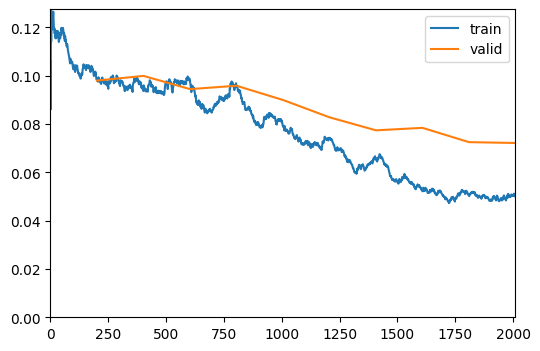

trainer = fastTrainer(data, model, loss_fn=loss, metrics=metrics, show_summary=False)

# trainer.fit_one_cycle(50, 1e-3)

trainer.fine_tune(200, freeze_epochs=2)

| epoch | train_loss | valid_loss | MSE | MAE | SSIM | time |
|---|---|---|---|---|---|---|
| 0 | 0.148481 | 0.127386 | 29.080843 | 4.695947 | 0.004185 | 00:10 |
| 1 | 0.135516 | 0.123581 | 134.304932 | 9.862062 | 0.000000 | 00:09 |


| epoch | train_loss | valid_loss | MSE | MAE | SSIM | time |
|---|---|---|---|---|---|---|
| 0 | 0.098401 | 0.097984 | 62.700428 | 7.043931 | 0.000729 | 00:10 |
| 1 | 0.093862 | 0.099934 | 63.443249 | 7.222431 | 0.001859 | 00:09 |
| 2 | 0.098112 | 0.094457 | 46.674049 | 6.179467 | 0.002166 | 00:09 |
| 3 | 0.096176 | 0.095853 | 68.515465 | 6.860152 | 0.003357 | 00:09 |
| 4 | 0.079372 | 0.089962 | 82.459068 | 8.111735 | 0.003615 | 00:09 |
| 5 | 0.073969 | 0.082787 | 59.123199 | 6.919718 | 0.000832 | 00:09 |
| 6 | 0.065842 | 0.077395 | 105.465813 | 9.070299 | 0.001098 | 00:08 |
| 7 | 0.052349 | 0.078422 | 204.180008 | 12.449563 | 0.002380 | 00:08 |
| 8 | 0.050645 | 0.072509 | 174.056458 | 11.461279 | 0.001694 | 00:08 |
| 9 | 0.050052 | 0.072150 | 192.433090 | 12.021430 | 0.002014 | 00:08 |

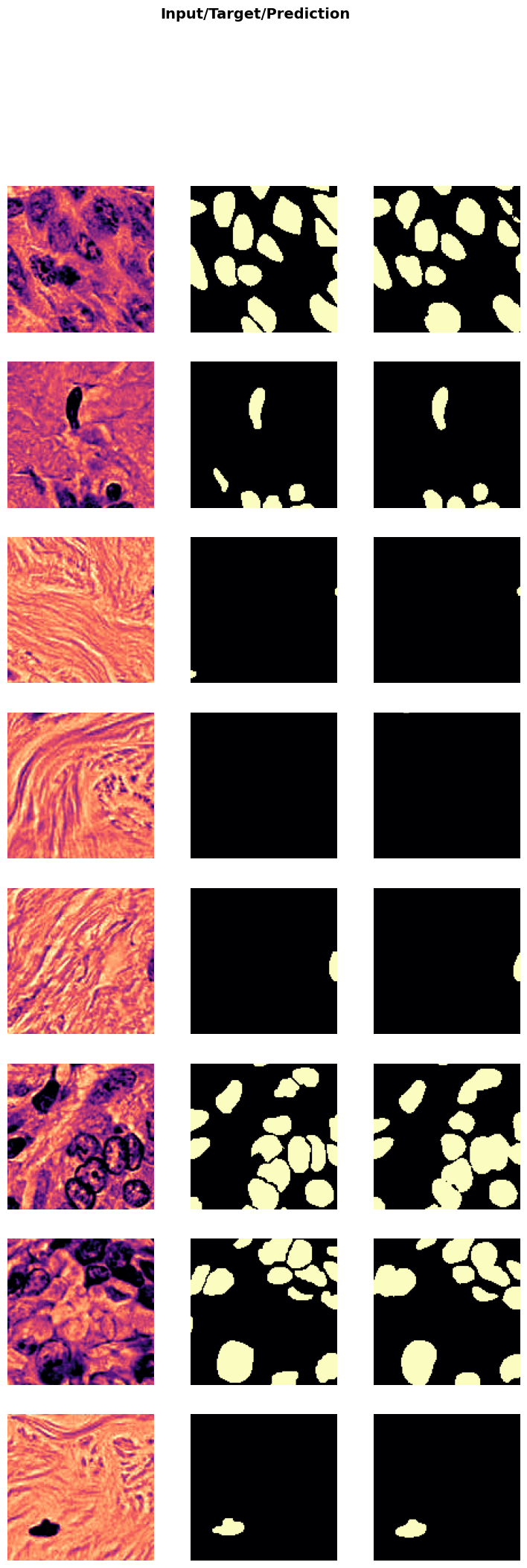

Show Results

In the next cell, we visualize the results of the trained model on a batch of validation data. This shows how well the model has learned to segment the nuclei.

- trainer.show_results(cmap='magma'): Displays a batch of images from the validation dataset along with the corresponding model predictions using the ‘magma’ colormap.

Visualizing the results helps in assessing the performance of the model and identifying areas that need further improvement.

trainer.show_results(cmap='magma')
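Because the model is trained with a logits-based loss, its raw outputs are unbounded scores rather than probabilities. If a binary mask is needed, one common approach is to apply a sigmoid and threshold the result. The sketch below illustrates this on a small nested list; the 0.5 threshold and the helper names are assumptions for illustration and are not part of the tutorial's code.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def logits_to_mask(logits, threshold=0.5):
    """Turn a 2D list of logits into a 2D list of 0/1 labels."""
    return [[1 if sigmoid(value) > threshold else 0 for value in row] for row in logits]


# Hypothetical 2x2 patch of raw model outputs.
mask = logits_to_mask([[-2.0, 0.0], [3.0, 0.1]])
print(mask)
```

With a threshold of 0.5, this is equivalent to keeping the pixels whose logit is greater than zero.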

Save the Trained Model

In the next cell, we save the trained model to a file. This preserves the model’s learned weights, so you can load and use the model later without retraining it.

- trainer.save('905-single-channel-model'): Saves the model to a file named ‘905-single-channel-model’ (written to models/905-single-channel-model.pth). You can change the filename to something more descriptive based on your project.

Suggestions for customization:

- Change the filename to include details like the model architecture, dataset, or date (e.g., ‘unet_resnet34_TNBC_2023’).
- Save the model in a specific directory by providing the full path (e.g., ‘models/unet_resnet34_TNBC_2023’).
- Save additional information like training history, metrics, or configuration settings in a separate file for better reproducibility.

Saving the model lets you share it with others or deploy it without retraining.
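One simple way to keep configuration settings next to the saved weights is a JSON file with the same base name. The sketch below uses only the standard library; the helper save_run_config and the choice of keys are hypothetical, while the values are taken from the cells in this tutorial. It writes to a temporary directory so that it can be run safely.

```python
import json
import tempfile
from pathlib import Path


def save_run_config(config, path):
    """Write a configuration dictionary as JSON, creating parent folders if needed."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2, sort_keys=True))
    return path


config = {
    "architecture": "unet_resnet34",
    "loss": "BCEWithLogitsLossFlat",
    "patch_size": [4, 128, 128],
    "overlap": 0.5,
    "bs": 16,
    "seed": 42,
    "epochs": 200,
    "freeze_epochs": 2,
}

with tempfile.TemporaryDirectory() as tmp:
    saved = save_run_config(config, Path(tmp) / "models" / "905-single-channel-model.json")
    restored = json.loads(saved.read_text())

print(restored["architecture"], restored["patch_size"])
```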

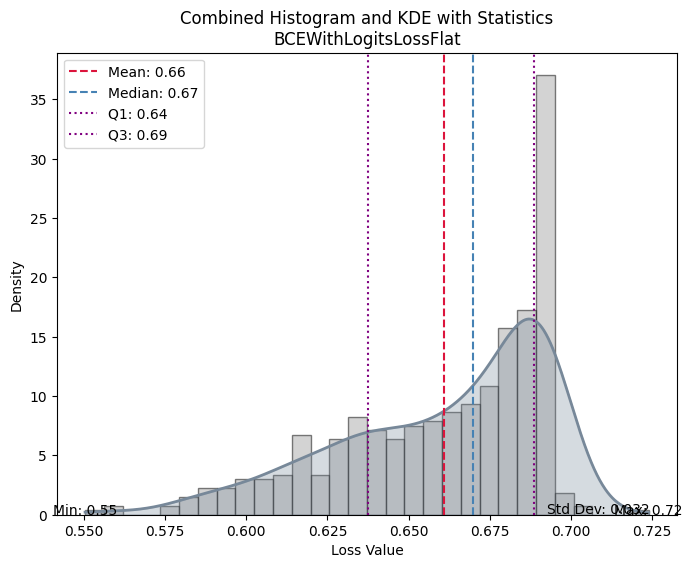

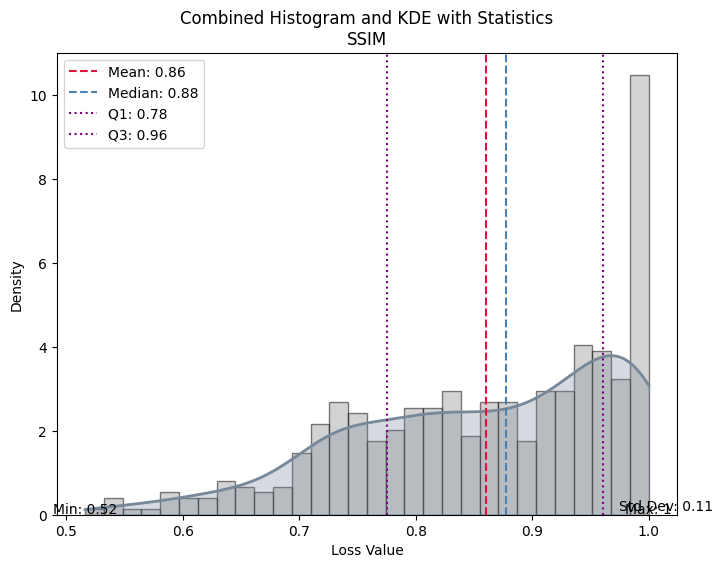

trainer.save('905-single-channel-model')

Path('models/905-single-channel-model.pth')

Evaluate the Model on Test Data

In the next cell, we evaluate the trained model on unseen test data. This gives an unbiased estimate of the model’s performance and shows how well it generalizes to new data.

- patches_test.csv: The CSV file in output_directory that lists the test patches.
- test_data: A DataLoader object created from the test CSV with test_biodataloader.
- evaluate_model(trainer, test_data, metrics=SSIMMetric(2)): Evaluates the model on the test dataset using the specified metrics (in this case, SSIM).

Suggestions for customization:

- Change the CSV path to point to a different test dataset.
- Add more metrics to the metrics parameter for a more comprehensive evaluation (e.g., MSEMetric(), MAEMetric()).
- Save the evaluation results to a file for further analysis or reporting.

The results also help identify areas that may need further improvement.
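The additional metrics suggested above are standard error measures. As a reminder of what they compute, here is a minimal pure-Python sketch of mean squared error and mean absolute error on flattened pixel values. The function names are hypothetical, and bioMONAI's MSEMetric and MAEMetric may differ in how they reduce over batches and dimensions.

```python
def mean_squared_error(prediction, target):
    """Average of squared differences between two equally long sequences."""
    if len(prediction) != len(target):
        raise ValueError("sequences must have the same length")
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(prediction)


def mean_absolute_error(prediction, target):
    """Average of absolute differences between two equally long sequences."""
    if len(prediction) != len(target):
        raise ValueError("sequences must have the same length")
    return sum(abs(p - t) for p, t in zip(prediction, target)) / len(prediction)


# Hypothetical flattened prediction and target values.
mse = mean_squared_error([0.0, 0.0], [1.0, 3.0])
mae = mean_absolute_error([0.0, 0.0], [1.0, 3.0])
print(mse, mae)
```

MSE penalizes large errors more strongly than MAE, so reporting both gives a fuller picture than either alone.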

# import pandas as pd

# test_csv_path = output_directory + '/patches_test.csv'

# df = pd.read_csv(test_csv_path, header='infer', delimiter=None, quoting=0)

# test_data = data.test_dl(df, with_labels=True)

test_data = test_biodataloader(data, output_directory + '/patches_test.csv')

# print length of test dataset

print('test images:', len(test_data.items))

evaluate_model(trainer, test_data, metrics=SSIMMetric(2));

test images: 461

| BCEWithLogitsLossFlat | Value |
|---|---|
| Mean | 0.660807 |
| Median | 0.669797 |
| Standard Deviation | 0.031980 |
| Min | 0.550291 |
| Max | 0.724020 |
| Q1 | 0.637555 |
| Q3 | 0.688730 |

| SSIM | Value |
|---|---|
| Mean | 0.860532 |
| Median | 0.877704 |
| Standard Deviation | 0.114155 |
| Min | 0.516135 |
| Max | 1.000000 |
| Q1 | 0.775289 |
| Q3 | 0.960985 |
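The tables above summarize per-image scores with a handful of descriptive statistics. The sketch below shows how such a summary can be computed with the standard library. It assumes linearly interpolated quartiles and the sample standard deviation; the source does not state which conventions evaluate_model uses, so the numbers may not match exactly. The input scores are hypothetical.

```python
import statistics


def summarize(scores):
    """Return the descriptive statistics shown in the evaluation tables."""
    # method="inclusive" interpolates linearly between the sorted data points.
    q1, median, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    return {
        "Mean": statistics.fmean(scores),
        "Median": median,
        "Standard Deviation": statistics.stdev(scores),
        "Min": min(scores),
        "Max": max(scores),
        "Q1": q1,
        "Q3": q3,
    }


# Hypothetical per-image scores.
summary = summarize([1, 2, 3, 4, 5])
for name, value in summary.items():
    print(f"{name}: {value:.6f}")
```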